Let’s Change the Way Machine Learning is Done

(Hint: Less is More)

Big Data is Overrated! Less is More!

The best way to get high performance Machine Learning models is to train on more data.

Or… is it really?

There is no question that Big Data helps boost a model’s performance. But make no mistake: models get better when they are fed more valuable information, and adding data at random is a very inefficient way to do that, because not all data is created equal. Less is more!

Building a dataset that will truly enhance your model’s learning process is not only about gathering a sufficient quantity of high quality data; it’s also about ensuring that this data has value for your use case and will meaningfully impact training. Once you achieve this, your model will be capable of learning better, with a minimal amount of data, and hence faster and more cost-efficiently.

Why Less is More

MORE DATA MEANS LONGER TIME TO MARKET

Data scientists spend up to 80% of their time preparing training data.

More data means longer training times and reduced or delayed ROI.

DATA IS EXPENSIVE TO PREPARE AND PROCESS

Data processing isn’t cheap. Servers, data warehousing, and data labeling add up quickly. Relying on hardware-centric solutions just isn’t feasible long term.

BAD DATA IS THE ROOT CAUSE OF MODEL UNDERPERFORMANCE

It doesn’t matter how good your models are if you’re training them on bad data. As datasets balloon in size, it’s often difficult to uncover what’s hurting performance.

Data Preparation on Autopilot

Let’s face it: according to the majority of data scientists, Data Preparation is the least favorite part of the job. That’s because finding corrupted or missing data, evaluating vendors to get it annotated, and choosing the right augmentations are tedious and time-consuming, to say the least. Imagine being able to put the full process on autopilot and have a technology-driven process select the right records and prepare them at the push of a button: that’s what you’ll get with the Alectio Platform.

Data-Centric AI on Demand

A growing number of ML experts agree that Data-Centric AI (the focus on data tuning rather than model tuning) is the future of ML. But like anything involving Machine Learning, Data-Centric AI requires sophisticated workflows, and they’re not easy to build! With the Alectio Platform, you can start powering up your applications with Data-Centric AI in just a few clicks.

How Alectio Works

Alectio is a machine learning optimization platform, currently available as a software development kit (SDK).

In practice, Alectio is a wrapper around your model. As you train your model, Alectio “listens” to what your model likes and what data it needs to become more accurate.

It understands what data is actually useful to your algorithms and what data isn’t.

The result is that you save on data labeling and spend less time and money training your models, all without trading down in performance.
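To make the idea of picking only the useful data more concrete, here is a minimal sketch of one classic active learning querying strategy, uncertainty sampling. It is an illustration only, not the Alectio SDK or Alectio’s algorithm: the function names, record ids, and probabilities below are all hypothetical. It ranks unlabeled records by the entropy of the model’s predicted class probabilities and sends only the most uncertain ones to labeling.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def select_for_labeling(predictions: Dict[str, Sequence[float]], budget: int) -> List[str]:
    """Return the ids of the `budget` records the model is least sure about."""
    ranked = sorted(predictions, key=lambda rid: entropy(predictions[rid]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for four unlabeled records (3 classes each).
unlabeled_predictions = {
    "rec_a": [0.98, 0.01, 0.01],  # confident: little left to learn here
    "rec_b": [0.34, 0.33, 0.33],  # near-uniform: the model is guessing
    "rec_c": [0.70, 0.20, 0.10],
    "rec_d": [0.50, 0.49, 0.01],
}

# With a labeling budget of 2, only the two most uncertain records are picked.
to_label = select_for_labeling(unlabeled_predictions, budget=2)
print(to_label)
```

In a full loop, the selected records would be labeled, added to the training set, and the model retrained before the next selection round.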

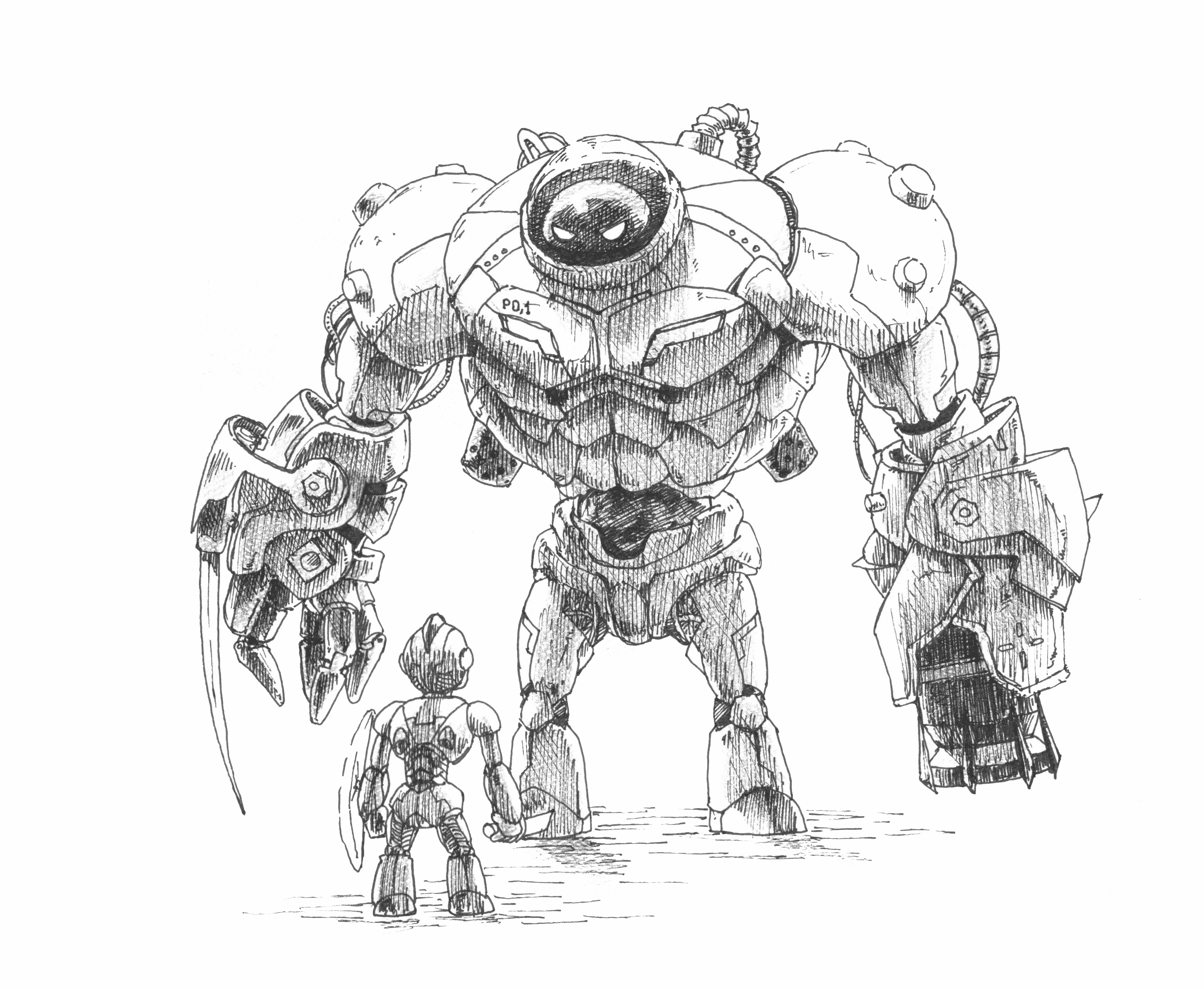

Learning from the Model’s “Body Language”

When a person learns something new, they will react to the new information they are being exposed to, and it shows through their body language. The fact they yawn might be a sign they are bored because the content is too easy, or because it’s too complex. The fact they scratch their head usually indicates that they are trying to summon more brain power to understand. Each one of those signs taken in isolation might not mean much, but combined together, they can be used by a teacher to build a curriculum just right for the student. Similarly, when models learn, things “happen” to them, and those “vitals” can be captured and analyzed to build the perfect dataset – without ever needing to invasively access the data.

Support for any Use Case, any Data Type, any Framework

Our technology is designed to integrate seamlessly with any framework and any system. You can use it from your own computer, with any cloud provider, and in conjunction with any Machine Learning platform. Because our algorithms are based on a Federated Meta Learning approach, they function independently of the type of data and the type of model you are working with.

Read about Use Cases in your Industry (COMING SOON!)

Keep your Model and Data Private

The Alectio platform offers three privacy-preserving options for connecting your model to the platform, so you can pick the one that matches your preferences. With each of them, you remain the full owner of your model and are never required to give access to your model or your data. You control how invasive the process is and choose which meta information Alectio may access to curate your data.

START USING THE ALECTIO SDK

START USING ALECTIOLITE

START USING THE ALECTIO CLI

Understand your Model Better

In many industries, using Deep Learning models is a challenge because they are not explainable. The Alectio platform is a unique explainability tool that lets data scientists correlate specific information encoded in their models with the specific records in the training data that this information originated from.

Our technology goes one step further than other ML explainability frameworks because it helps you understand the why rather than the how: where others could tell you that a loan was denied to a customer because of the zip code they live in, we can explain which records led to the generalization that customers living in a specific area are more likely to default, giving you the tools to identify biases and act upon them. We’re making explainability insights actionable.

Learn how Alectio helps users fix biases.

Discover our Products

Data Curation

Alectio’s flagship offering helps you understand what data helps your model learn, what data is irrelevant, and what data is actively hurting your model’s performance. Our data curation solution drastically cuts labeling costs, reduces model training (and retraining) times, and helps you uncover the data your model really needs to reach your target performance.

Hybrid Labeling Solution

With Alectio, you don’t have to get tied into long-term contracts with big providers. Instead, we combine our model-powered autolabeling solution with a marketplace full of expert, responsive, nimble labeling companies to get you the best labels for your data. That means combining the best of machine and human intelligence to get you faster turnaround on every row of data you label.

Data Collection

Alectio not only helps you find the best data to train your models with; we can also show you what data to collect next. Once you know the information your model needs to learn, the data it already understands, and the data that hurts its performance, you no longer have to collect everything: you just need to collect the right things.

Data Filtering

Alectio can also help with on-edge data collection by filtering and curating your data as you collect it. This can be especially useful for domains like autonomous vehicles, where large volumes of data are collected and stored in the cloud and where labeling costs are especially high. Instead of collecting everything, we’ll help you save only the most important information, in real time.
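As a rough illustration of filtering data while it is being collected, the sketch below keeps a record only when a model is unsure about it. This is a generic least-confidence filter written for this page, not Alectio’s filtering technology: the threshold, the frame ids, and the probabilities are hypothetical, and a real on-edge filter would score records with an actual model.

```python
from typing import Callable, Iterable, Iterator, Sequence, Tuple

# (record id, predicted class probabilities) as they arrive from a device.
Record = Tuple[str, Sequence[float]]


def least_confidence(probs: Sequence[float]) -> float:
    """1 minus the top class probability: 0 means fully confident."""
    return 1.0 - max(probs)


def filter_stream(
    stream: Iterable[Record],
    threshold: float,
    score: Callable[[Sequence[float]], float] = least_confidence,
) -> Iterator[str]:
    """Yield the ids of records worth keeping, one at a time, as they arrive."""
    for record_id, probs in stream:
        if score(probs) >= threshold:
            yield record_id


# Hypothetical stream of camera frames with two-class predictions.
incoming = [
    ("frame_001", [0.97, 0.03]),  # confident: dropped
    ("frame_002", [0.55, 0.45]),  # uncertain: kept
    ("frame_003", [0.90, 0.10]),  # confident: dropped
    ("frame_004", [0.60, 0.40]),  # uncertain: kept
]

kept = list(filter_stream(incoming, threshold=0.3))
print(kept)
```

Because the filter is a generator, each record is scored and either kept or dropped as soon as it arrives, without storing the full stream first.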

Comprehensive Feature List

Data Curation

Next Generation Active Learning

ML Pipeline for Active Learning

Human-in-the-Loop

Querying Strategies

Machine-in-the-Loop

Manual Curation

Hybrid querying strategies

Querying strategy stabilizer

Auto Data Curation

Saving on Compute

Early Stopping

Active Knowledge Transfer

Curation report

Model issue diagnostics

Training-Time Explainability Framework

Advanced Tools

Stress Testing Tool

Smart Data Collection

Strategy Generator

Smart Orchestrator for Synthetic Data Generation

Extensive querying strategy library with cards

Labeling Marketplace

Labeling Partners

Autolabeling Models

Low-Latency Microjobs Workflow

Labeling Partner Recommendation Engine

Resource Optimization Technology

Data Filtering

Data Filter Creation Workflow

Data Filter Marketplace

LabelOps Tools

Human-in-the-Loop Module

Labels Database

Labeling Report

Labeling Instructions Generator

Example Selection

Strength Measurement

Version Control